Overview

The Metrics view helps you understand both the current health of an Iceberg table and the impact of optimization runs.

There are two types of metrics:

- Table Metrics – always available for a table, showing its current state.

- Run Metrics – measurements for a single optimization run: input vs output data and delete files (counts and sizes) for that run.

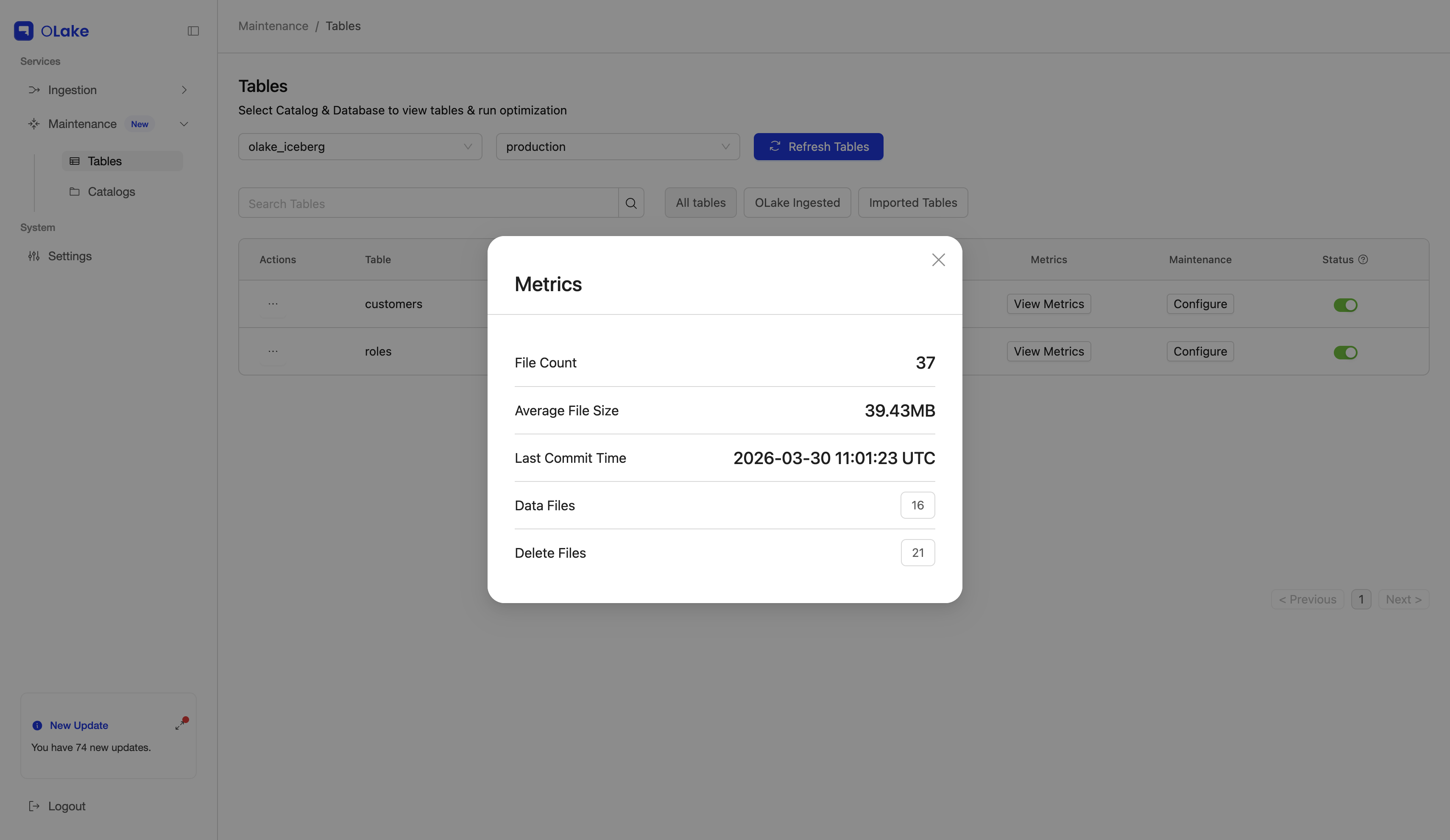

Table Metrics

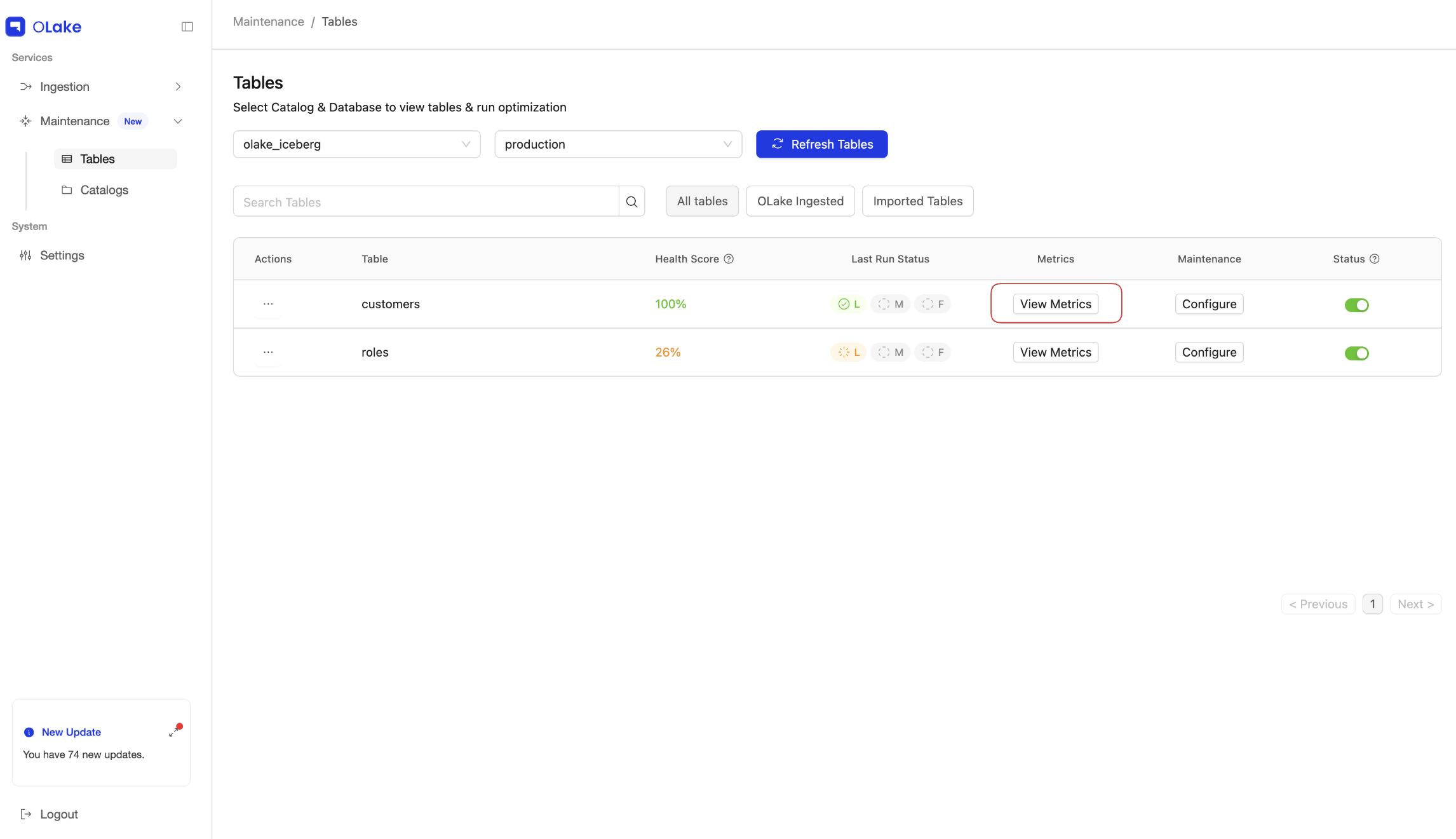

You can see table-level metrics from the Tables page by clicking the View Metrics button for a table.

These metrics describe the current layout and size of the Iceberg table:

| Metric | Description |
|---|---|
| File Count | Total number of data and delete files that currently make up the table. |
| Total Size | Combined on-disk size of all files that belong to the table. |
| Average File Size | Average size of files; useful to see if the table has many small files. |
| Last Commit Time | Time of the last successful Iceberg commit for the table. |
| Data Files Count | Number of data files that store actual table rows. |
| Delete Files Count | Number of delete files (equality + position) that track removed or filtered-out rows. |
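As a rough illustration of how Average File Size relates to the other table metrics, the sketch below assumes it is simply Total Size divided by File Count; the source does not state the exact formula. The function names and the 32 MiB threshold are hypothetical and chosen only for the example.

```python
# Hypothetical threshold: the source does not define what counts as a "small" file.
SMALL_FILE_THRESHOLD_BYTES = 32 * 1024 * 1024  # 32 MiB


def average_file_size(total_size_bytes: int, file_count: int) -> float:
    """Assumed formula: Total Size / File Count (not confirmed by the source)."""
    if file_count <= 0:
        return 0.0  # an empty table has no meaningful average
    return total_size_bytes / file_count


def has_many_small_files(
    total_size_bytes: int,
    file_count: int,
    threshold_bytes: int = SMALL_FILE_THRESHOLD_BYTES,
) -> bool:
    """Flag a table whose average file size falls below the chosen threshold."""
    if file_count <= 0:
        return False
    return average_file_size(total_size_bytes, file_count) < threshold_bytes
```

For example, `has_many_small_files(5 * 1024**3, 1000)` flags a 5 GiB table spread over 1,000 files, while the same 5 GiB in 10 files is not flagged.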

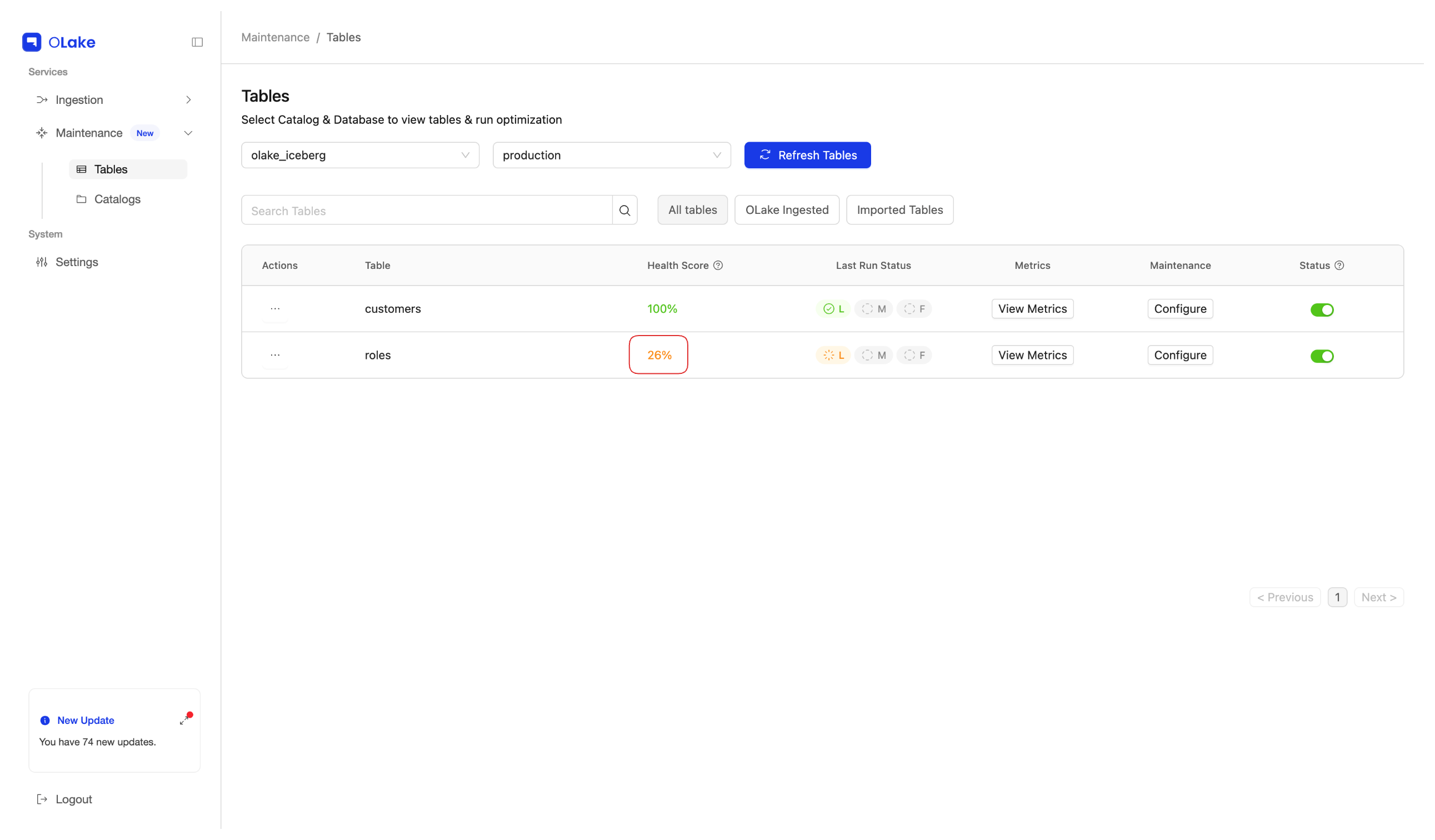

| Metric | Description |
|---|---|
| Health Score | Combined score based on Small Files Score, Eq Delete Score, and Pos Delete Score. |

Health Score appears on the Tables page, not in the View Metrics modal.

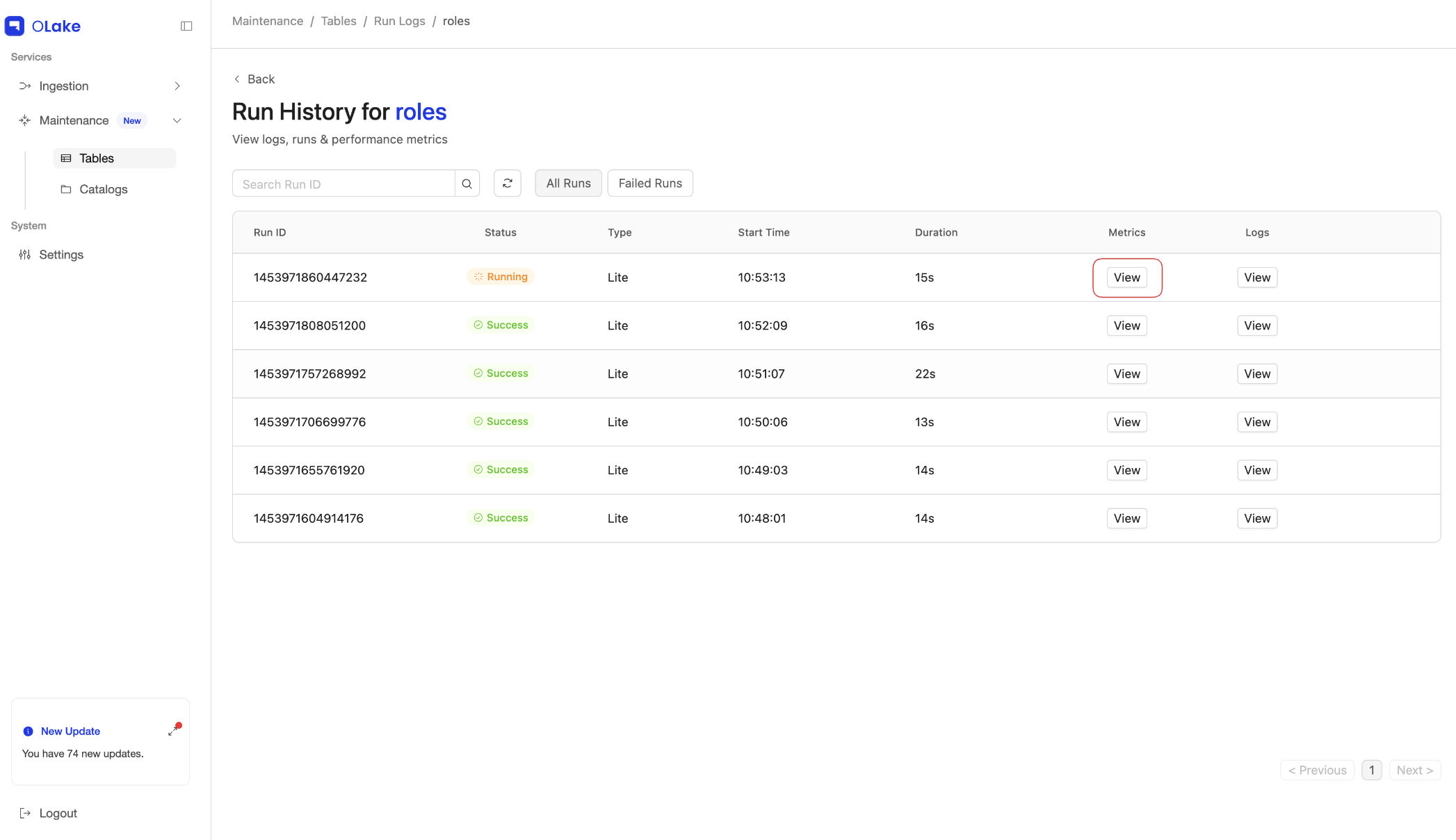

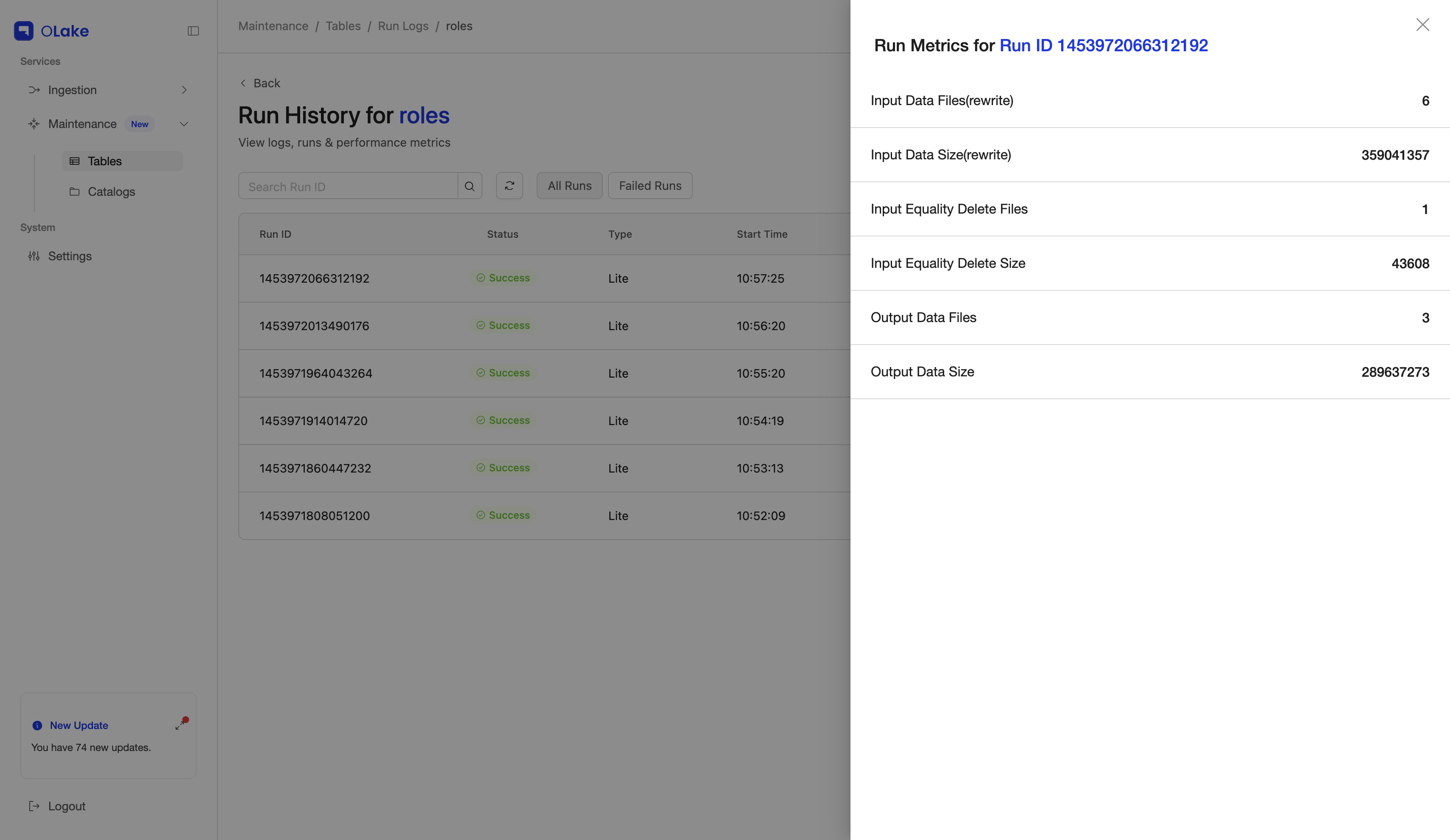

Run Metrics

Run Metrics describe what one optimization run did to the table: how many data and delete files went in, and how many data and delete files came out. Open them from the Runs page via the View Metrics action for the run you want to inspect.

All size fields in Table Metrics and Run Metrics are shown in bytes.

These metrics help you see how much a specific run improved the table:

| Metric | Description |
|---|---|
| Input data files | Count of input data files. |
| Input data size | Total size of input data files. |
| Input equality delete files | Count of input equality delete files (number of equality delete files in the current snapshot × number of sub-tasks). |
| Input equality delete size | Total size of input equality delete files. |
| Input position delete files | Count of input position delete files (number of position delete files in the current snapshot × number of sub-tasks). |
| Input position delete size | Total size of input position delete files. |
| Output data files | Count of output data files produced. |
| Output data size | Total size of output data files produced. |
| Output delete files | Count of output delete files produced. |
| Output delete size | Total size of output delete files produced. |
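The input delete file counts are scaled by the number of sub-tasks, so the reported value can be larger than the number of delete files in the snapshot. The sketch below works through that arithmetic; the function names are hypothetical. The source describes this scaling only for counts, so the sketch does not apply it to sizes.

```python
def reported_input_delete_files(snapshot_delete_files: int, sub_tasks: int) -> int:
    """Reported count = delete files in the current snapshot * number of sub-tasks."""
    if snapshot_delete_files < 0 or sub_tasks < 0:
        raise ValueError("counts must be non-negative")
    return snapshot_delete_files * sub_tasks


def snapshot_delete_files(reported_count: int, sub_tasks: int) -> int:
    """Invert the scaling to recover the per-snapshot delete file count."""
    if sub_tasks <= 0:
        raise ValueError("sub_tasks must be positive")
    if reported_count % sub_tasks != 0:
        raise ValueError("reported_count is not a multiple of sub_tasks")
    return reported_count // sub_tasks
```

With 12 equality delete files in the snapshot and 4 sub-tasks, the reported Input equality delete files value would be 48 under this reading.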

Interpreting Metrics

- A high Delete Files Count or very low Average File Size can indicate fragmentation and metadata overhead.

- After a successful optimization run, compare Input vs Output:

  - A smaller Output delete size (and/or fewer Output delete files) typically indicates the run reduced delete-file fragmentation.

  - Changes in Input/Output data file counts and data size show how much data was rewritten/compacted for that run’s scope.
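The Input vs Output comparison can be sketched as a small helper. The `RunMetrics` class and its field names below are hypothetical and simply mirror the Run Metrics table; they are not an actual API of the product.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    """Hypothetical container mirroring the Run Metrics table (sizes in bytes)."""
    input_data_files: int
    input_data_size: int
    input_eq_delete_files: int
    input_eq_delete_size: int
    input_pos_delete_files: int
    input_pos_delete_size: int
    output_data_files: int
    output_data_size: int
    output_delete_files: int
    output_delete_size: int


def summarize_run(m: RunMetrics) -> dict:
    """Return output-minus-input deltas; negative values mean the run reduced that metric."""
    # Input delete file counts are reported multiplied by the number of sub-tasks,
    # so the delete file delta may overstate the real reduction.
    input_delete_files = m.input_eq_delete_files + m.input_pos_delete_files
    input_delete_size = m.input_eq_delete_size + m.input_pos_delete_size
    return {
        "data_files_delta": m.output_data_files - m.input_data_files,
        "data_size_delta": m.output_data_size - m.input_data_size,
        "delete_files_delta": m.output_delete_files - input_delete_files,
        "delete_size_delta": m.output_delete_size - input_delete_size,
    }
```

A run that turns 200 input data files into 20 output data files yields a `data_files_delta` of -180, which matches the "fewer, larger files" outcome that compaction aims for.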